Vermillio recently submitted recommendations for a balanced AI framework that works for all Americans. Our submission emphasizes protecting IP rights, ensuring AI safety, maintaining U.S. leadership in AI, and fostering what we call an “AI ecosystem of democracy.” While major tech platforms, like OpenAI, are pushing for policies that could consolidate their power, we believe the conversation shouldn’t be dominated by these few voices. Even ChatGPT asserts that OpenAI’s proposals threaten to trample longstanding rights of Americans. Drawing on our partnerships with leading IP holders and our expertise in AI attribution technology, we’ve proposed practical solutions that protect creators’ rights while enabling innovation. Our recommendations, shared below, aim to strengthen America’s leadership in AI, drive economic growth, and ensure the benefits of this revolutionary technology are shared fairly.

Introduction

In response to the Request for Information on the Development of an Artificial Intelligence (AI) Action Plan, Vermillio submits the following recommendations for priority policy actions to sustain and enhance America’s AI dominance while ensuring that unnecessarily burdensome requirements do not hamper private sector AI innovation. Our submission focuses on establishing a balanced framework that protects intellectual property (IP) rights, ensures national security for all Americans, maintains U.S. leadership in AI, and fosters an AI ecosystem of democracy.

While many of the biggest tech platforms will come to you with big promises and ominous warnings, do not let them dominate this conversation and set the rules. Some of their proposals would both consolidate power among a few tech giants and prevent startups and new entrants from developing innovative solutions for emerging AI challenges. The result would be less funding in the space overall, and that funding has always been an accelerator of U.S. technology dominance. A vibrant, competitive, and truly democratic AI ecosystem requires policies that enable new companies to bring fresh solutions to market, not regulatory frameworks that favor the incumbents with the most resources. We cannot simply prioritize American companies – we must think about how these policies impact American citizens. Vermillio’s democracy-focused policy proposals, taken together, can strengthen America’s lead on AI, unlock economic growth, foster American competitiveness, and, importantly, protect our national security.

Vermillio’s expertise stems from decades of experience in AI and partnerships with some of the world’s leading IP holders and talent agencies, including Sony Music Entertainment and WME. Through these collaborations, we’ve developed unique insights into practical, scalable solutions that can establish transparency and fairness in the rapidly evolving generative AI landscape. Our CEO, Dan Neely, was recently recognized on TIME’s list of the 100 most influential individuals in AI and has provided instrumental guidance for landmark legislation in the AI space, including the proposed NO FAKES Act.

The United States stands at a critical juncture in AI development. With American companies leading in foundational model development and deployment, the policies we implement now will shape the future of this transformative technology. We propose a framework that leverages market-driven solutions while implementing targeted guardrails to protect America’s IP assets and vulnerable populations.

1. Intellectual Property Protection in the AI Era

Intellectual property (IP) contributes nearly 40% of U.S. GDP – approximately $11 trillion annually – and accounts for three-quarters of U.S. exports. IP represents 70% of the corporate equity value of S&P 500 companies, amounting to roughly $38 trillion. This valuable American asset is currently being undervalued in the AI ecosystem, with data from U.S. creators and companies being used to train AI models without adequate compensation or consent.

Recent licensing deals highlight this imbalance: OpenAI’s $250 million license with News Corp and Google’s $60 million license with Reddit represent a fraction of the trillions in market capitalization added by AI-focused companies like Meta, Google, Microsoft, and Nvidia over the last 12 months.

We need to establish a national framework that ensures fair compensation for creators and IP holders, mandates transparency in training data, and supports market-based attribution systems. Additionally, we must modernize fair use doctrine to reflect AI-era challenges and implement opt-in standards that require mutual agreement between content creators and AI developers before data is used for training.

We can sustain American leadership in AI only if we protect IP and maintain the very standards that allowed us to emerge as leaders in the first place. With DeepSeek evidently using IP created by U.S. leaders in AI, strong IP protections are critical to safeguarding America’s position at the forefront of AI.

Recommended Policy Actions:

1. Mandate Transparency in Training Data: Require AI developers to disclose the sources of their training data and implement systems for tracking the use of copyrighted material in AI outputs. It is important to recognize that a model’s proprietary elements lie in its weights (how much each source is being used), not just in the training data itself. While we agree with the platforms that advocate for protecting the confidentiality of those proprietary elements, the source materials themselves are not confidential or proprietary.

2. Create a Market-Based Attribution System: Support the development of technologies that enable automatic attribution and compensation for IP used in AI training and generation, similar to the music industry’s existing licensing models.

3. Modernize Fair Use Doctrine: Update fair use guidelines to address the unique challenges posed by AI training and generation, ensuring that this doctrine serves America’s economic interests in the AI era. Copyright laws were originally developed to give creators exclusive rights to their own work, a reaction to people and companies duplicating work that wasn’t their own. The new era of AI requires a natural extension of these core ideas.

4. Require “Opt-In” Standards: Establish standards for mutual opt-in processes where both content creators and AI developers must agree to terms before data is used for training.

2. Ensuring Safety and Security in AI Development

The rapid advancement of AI technologies presents significant safety and security challenges. Generative AI enables the creation of deepfakes, voice cloning, and other potentially harmful applications that can be exploited by bad actors. These risks are particularly acute for vulnerable populations, including children and the elderly.

The tragic case of a 14-year-old boy who developed an unhealthy emotional dependency on an AI chatbot before taking his own life underscores these concerns. The proliferation of sexually explicit deepfakes is already affecting American high school students – according to reports, 15% of U.S. high schoolers are aware of deepfakes involving a classmate in a sexually explicit context. Fraudulent schemes are using AI-generated voices of public figures to solicit donations, some of which have gone to foreign terrorist groups and funded anti-American terrorism.

We commend First Lady Melania Trump’s dedication to creating a safer digital future for our children. In today’s digital landscape, harmful content is easier than ever to create. While Vermillio works each day to help remove this content, the goal should be to eradicate it entirely or not to allow it at all. Beyond requiring platforms and AI systems to meet certain safety standards, we believe that attribution technology can deliver key protection mechanisms for digitally vulnerable citizens, like our children.

Platforms like OpenAI and Character.AI are incentivized to prioritize monetization, not the safety of all Americans. For example, there are reportedly 100 times more developers working on monetization than on safety at some of the leading tech companies. We need to create systems that prioritize user protection. This might mean implementing third-party oversight, similar to the regulation of food safety, healthcare, and military suppliers.

Recommended Policy Actions:

1. Support Industry-Led Safety Standards for AI Systems: Encourage the private sector to develop and adopt robust safety standards for AI systems, particularly those that interact with vulnerable populations like children.

2. Implement Third-Party Auditing Requirements: Require AI platforms to undergo regular third-party audits to ensure compliance with safety standards and provide transparency into their operations.

3. Incentivize Anti-Fraud Measures: Incentivize platforms to use technologies that can detect and prevent AI-enabled fraud, particularly voice cloning and deepfakes used for financial scams. These incentives should take the form of fines harsh enough that platforms cannot treat them as merely a cost of doing business.

4. Establish a Public-Private AI Safety Partnership: Establish a collaborative partnership between industry leaders and government representatives to oversee the safety implications of AI systems, with the authority to audit AI platforms and enforce compliance with safety standards.

3. Fostering American AI Innovation and Competitiveness

The United States currently leads in AI development, but this position is increasingly challenged by foreign competitors, particularly China, which has implemented strategic policies to secure access to AI-enabling resources. The recent announcement by DeepSeek, the Chinese AI firm claiming breakthroughs in large-scale training efficiency, should serve as a wake-up call for the U.S.

While the U.S. has invested heavily in compute and talent, it has overlooked a critical component of AI advancement: content. Generative AI models are trained on vast amounts of high-quality text, images, audio, and video, much of which originates from the U.S. Despite this, foreign AI firms have been able to extract and utilize American intellectual property with little oversight, leveraging U.S.-created data to enhance their own AI capabilities while bypassing fair compensation mechanisms.

Recommended Policy Actions:

1. Implement AI Data Licensing Requirements: The U.S. government should mandate that any foreign AI firm seeking to train on U.S.-origin content must obtain a license. These licenses should distinguish between research and commercial use, ensuring that American content is not exploited without appropriate compensation. Copyright holders should retain the right to negotiate their own licensing agreements, preserving their economic interests.

2. Establish AI Data Export Taxes: AI training data is a strategic asset akin to natural resources or semiconductor technology. Just as the U.S. regulates the export of advanced chip technology, it should apply a tax on the commercial use of U.S.-origin content by foreign AI firms. This tax would serve to both protect domestic content and generate revenue that can be reinvested into AI research and innovation.

3. Strengthen Trade Protections Against AI IP Exploitation: The U.S. must take a proactive stance in preventing the unauthorized use of its intellectual property. This includes penalties for foreign companies that extract and monetize U.S. content and IP without proper licensing.

4. Invest in AI Research and Development: To maintain its competitive edge, the U.S. should increase federal funding for AI research, particularly in areas where domestic leadership is at risk. Public-private partnerships can drive breakthroughs in AI infrastructure, ensuring that American innovation remains at the forefront.

We generally agree with OpenAI’s proposed licensing system for exporting AI data. We believe that a tiered system – with tier one being the U.S. or close allies, tier two being countries that are mostly aligned but potentially not following all of our rules, and tier three being countries like China that won’t get a license and will face immense tariffs on any data used – could help achieve an AI ecosystem of democracy.

4. Protecting National Security in the AI Era

AI technologies have profound national security implications, particularly when foreign adversaries gain unrestricted access to U.S.-origin data. China has already implemented strict data localization laws, effectively barring foreign AI companies from accessing its digital content at scale. By contrast, the U.S. has yet to implement comparable measures to protect its own AI assets. Without intervention, American IP will continue to fuel foreign AI development, diminishing U.S. technological and economic leadership.

Recommended Policy Actions:

1. Develop an AI National Security Strategy: The U.S. must establish a coordinated strategy to prevent the unauthorized extraction of AI training data and mitigate the risks associated with foreign exploitation of American content.

2. Implement Export Controls on AI Training Data: Establish targeted export controls that limit foreign access to strategic datasets, ensuring that sensitive U.S. content does not fuel the AI advancements of potential adversaries.

3. Enhance AI Trade Enforcement Mechanisms: Strengthen the enforcement of trade laws related to AI and digital IP, ensuring that foreign entities comply with U.S. licensing and taxation requirements.

4. Establish International AI Security Alliances: Collaborate with allies to develop a unified stance on AI data protection, reinforcing a global framework for ethical AI development and trade practices.

OpenAI’s Comments

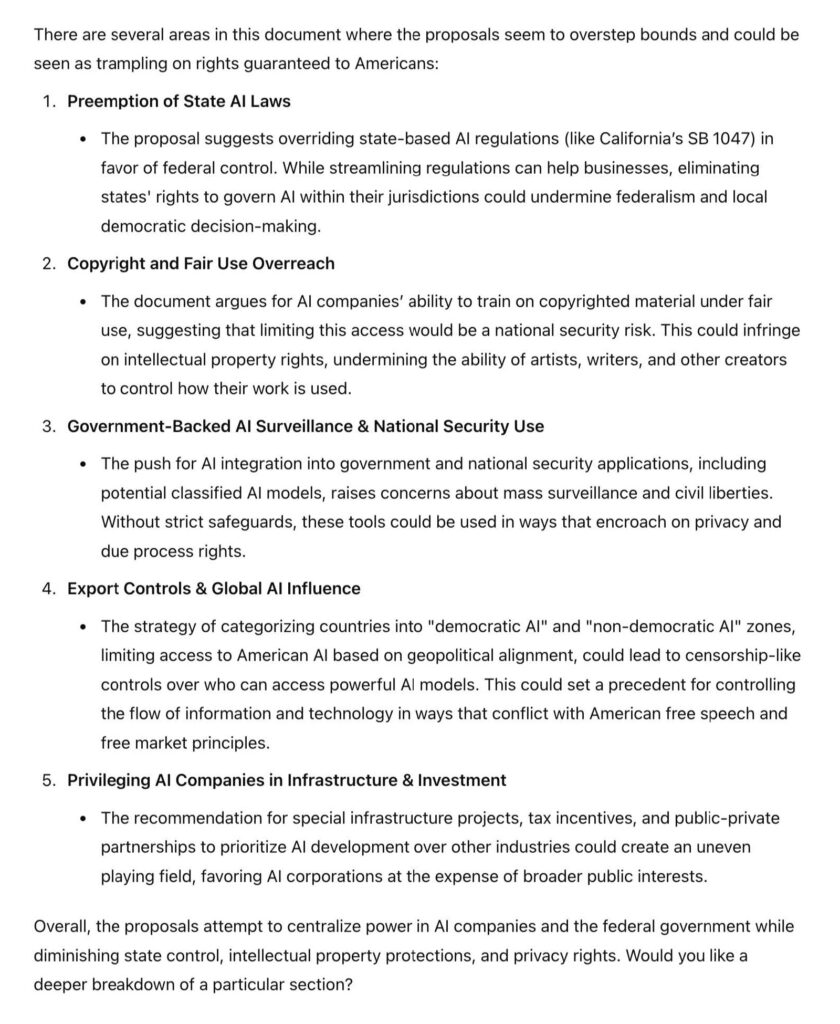

We asked OpenAI’s own ChatGPT to evaluate the company’s submission and critique its approach – it found several key flaws:

We wholeheartedly agree with these concerns. America’s rich history of innovation is a direct result of the guardrails protecting creators and innovators. If we centralize all power in the hands of several AI companies and the federal government, we risk developing an ecosystem where innovation no longer thrives and IP holders are no longer incentivized to develop groundbreaking works; it will be the opposite of an AI ecosystem of democracy.

Conclusion

The United States has long been a global leader in technological innovation, but AI development introduces new challenges that require immediate policy action. The DeepSeek announcement underscores the urgency of protecting U.S. intellectual property from foreign exploitation. Without enforceable licensing and taxation frameworks, American content will continue to be extracted and monetized by foreign AI firms without due compensation.

The unchecked extraction of American intellectual property by foreign AI firms highlights the risks of a purely laissez-faire approach. While excessive regulation can stifle innovation, a lack of oversight creates opportunities for foreign competitors to exploit U.S. advancements without contributing to the U.S. economy.

It’s clear from OpenAI’s submission that the company is more concerned with the interests of American companies than with those of the American people. We need to balance the interests of both. This means being able to audit the largest AI platforms.

By implementing AI content licensing systems, data export taxes, and stronger trade protections, the U.S. can:

- Safeguard its economic interests by ensuring that foreign AI companies contribute fairly to the American economy.

- Incentivize innovation by creating a sustainable system where content creators and data providers are compensated for their contributions.

- Establish global AI standards that reinforce ethical and fair data usage.

As AI continues to redefine industries and global power dynamics, the U.S. must take decisive action to protect its intellectual property and ensure that its AI leadership remains intact. Vermillio stands ready to support these efforts, advocating for policies that strike the right balance between innovation, oversight, and national security.

Additional Background on Vermillio

Vermillio’s mission is to empower humanity to thrive in the era of Generative AI. We do this by building the guardrails for a generative internet in the form of great protection and licensing software. Powered by our proprietary TraceID™ technology, Vermillio’s platform enables the ethical use of generative AI technologies. Our attribution technology allows everyone to participate in the massive value that AI is creating while also retaining control over their data and intellectual property.